EU AI Act Compliance Guide: What Insurance, Fintech, and Healthcare Companies Must Do Before August 2026


August 2, 2026. That's the date the EU AI Act's high-risk provisions become enforceable. If you're building or deploying AI systems in insurance, fintech, or healthcare — that's your deadline. Not a suggestion. Not a "we should probably start thinking about this" kind of date. An actual regulatory wall.

And here's the thing: most companies I talk to know the EU AI Act exists. They've read a headline or two. Maybe someone on the legal team forwarded an article last year. But almost nobody has started building toward EU AI Act compliance in any meaningful way. The gap between awareness and action is enormous.

Frankly, most compliance guides don't help. They're written by lawyers for lawyers — 80 pages of regulatory language that no engineering team can translate into a Jira ticket. This one is different. I've spent the last year working with engineering teams deploying AI in regulated industries, and this guide is what I wish someone had handed me at the start.

EUR 35M

Maximum penalty — or 7% of global annual turnover, whichever is higher. Nearly double GDPR's maximum.

Whether you're a CTO, VP of Engineering, Product Lead, or Compliance Officer — this is the actionable EU AI Act compliance checklist your team needs right now. Not next quarter. Now.

What Is the EU AI Act and Why Should You Care Now?

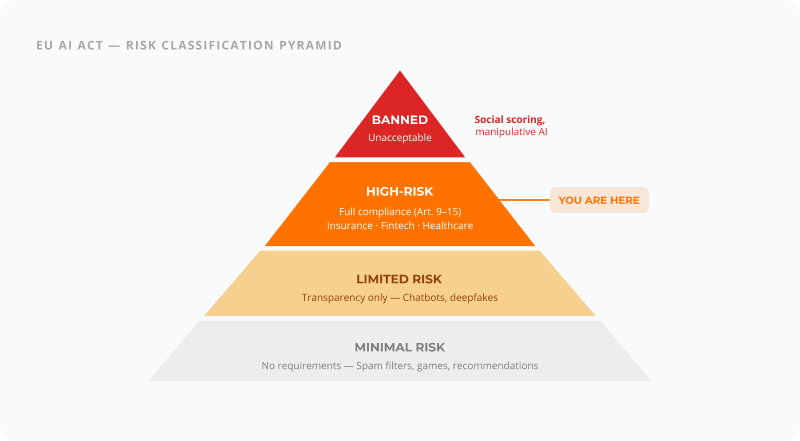

The EU AI Act is the world's first comprehensive legal framework for artificial intelligence, creating a four-tier risk classification system with binding requirements for high-risk AI systems in insurance, fintech, and healthcare. High-risk provisions become enforceable August 2, 2026.

Skip the "first-ever comprehensive AI regulation" preamble — you've heard it. What matters is this: the EU AI Act creates a legal classification system for AI. Depending on what your system does and who it affects, you'll face different requirements. Think of it like building codes for software. A garden shed has different rules than a hospital.

The part that matters to most AI teams in regulated industries is the high-risk category. That's where insurance, fintech, and healthcare AI systems almost always land. And the high-risk requirements aren't light. If your team needs hands-on support, our EU AI Act compliance services cover the full scope — from risk classification to conformity assessment.

The Timeline — Key Dates You Actually Need

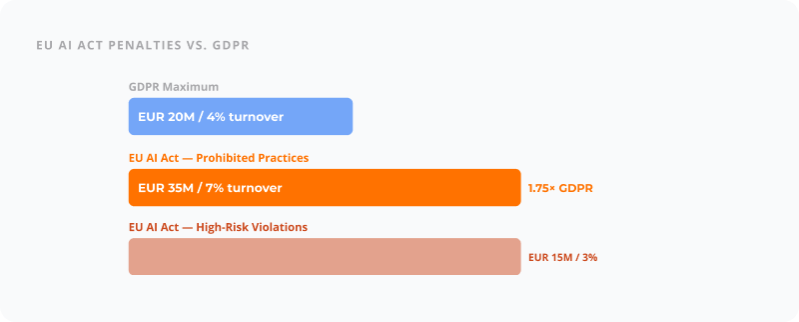

The Penalties Are Real

For a mid-market company doing EUR 100 million in revenue, 3% of turnover is EUR 3 million, and the ceiling for a high-risk violation is the higher of that figure and EUR 15 million. That's not a rounding error on your P&L.

The EU has already established the European AI Office to oversee enforcement, and national competent authorities are being set up across member states. The enforcement infrastructure is being built in parallel with the compliance deadlines.

The EU AI Act's maximum penalty of EUR 35 million or 7% of global turnover is nearly double GDPR's maximum fine.

Is Your AI System "High-Risk"? Here's How to Tell

If your AI system makes or influences decisions about people's credit, insurance coverage, or healthcare, it is almost certainly classified as high-risk under Annex III of the EU AI Act. Credit scoring, claims processing, medical diagnostics, underwriting, and loan approval are all explicitly listed.

If you're deploying AI that makes or influences decisions about people's money, health, or insurance — it's almost certainly high-risk.

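The Four Risk Tiers

The Act sorts AI systems into four tiers: unacceptable risk (prohibited outright), high risk, limited risk (transparency obligations), and minimal risk.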

Annex III — Where Your Industry Falls

Annex III of the EU AI Act lists the specific use cases classified as high-risk. Here's where it gets concrete.

| Industry | AI Use Case | Annex III | Classification |
|---|---|---|---|
| Insurance | Claims assessment & processing | 5(b) | High-risk |
| Insurance | Premium pricing algorithms | 5(b) | High-risk |
| Insurance | Fraud detection | 5(b) | High-risk |
| Insurance | Underwriting automation | 5(b) | High-risk |
| Fintech | Credit scoring | 5(b) | High-risk |
| Fintech | Loan approval/denial | 5(b) | High-risk |
| Fintech | KYC/AML screening | 5(b) | High-risk |
| Healthcare | Diagnostic imaging AI | 1 (MDR) | High-risk |
| Healthcare | Clinical decision support | 1 (MDR) | High-risk |
| Healthcare | Triage & prioritization | 5(b)/1 | High-risk |
| Healthcare | Drug interaction prediction | 1 (MDR) | High-risk |

If your system shows up on this table — and if you're in one of these industries deploying AI, it probably does — keep reading.

All AI systems used for credit scoring, insurance claims, medical diagnostics, and loan approval are classified as high-risk under EU AI Act Annex III.

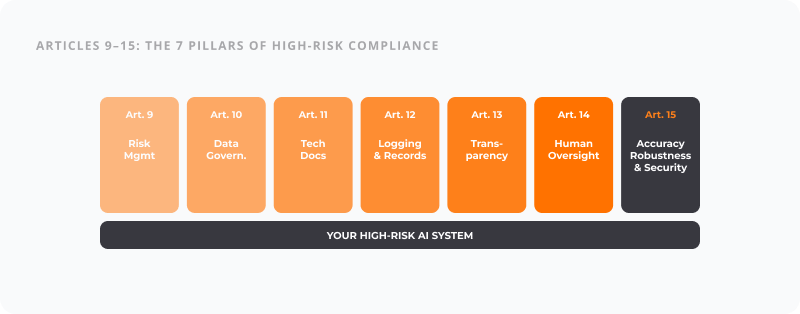

The 7 Things Your AI System Must Have by August 2026

Articles 9 through 15 lay out what every high-risk AI system needs. Here's each one translated from legal language to engineering specs.

| # | EU AI Act Requirement | Article | What It Means for Engineering |
|---|---|---|---|
| 1 | Risk Management | Art. 9 | Ongoing, documented risk process tied to model lifecycle |
| 2 | Data Governance | Art. 10 | Data lineage, bias testing, quality monitoring for all training data |
| 3 | Technical Documentation | Art. 11 | Auto-generated system docs, model cards, validation results |
| 4 | Record-Keeping & Logging | Art. 12 | Immutable decision-level audit trails, 5–10 year retention |
| 5 | Transparency | Art. 13 | Explainability layers (SHAP/LIME), user-facing explanations |
| 6 | Human Oversight | Art. 14 | Override mechanisms, kill switches, escalation workflows |
| 7 | Accuracy & Security | Art. 15 | Adversarial testing, drift monitoring, fallback mechanisms |

1. Risk Management System

Ongoing, documented risk management throughout the AI lifecycle

What the law says

An ongoing, documented risk management process that identifies, analyzes, evaluates, and mitigates risks throughout the AI system's lifecycle. Not a one-time risk assessment — a living system.

What to build

A risk registry connected to your model lifecycle. Automated risk scoring tied to model versions. Residual risk documentation. Testing protocols targeting identified risks. Feedback loops from production back to risk evaluation.

In practice — Insurance

Your fraud detection model flags claims for review. The risk management system tracks false positive rates by demographic group, monitors for concept drift monthly, and triggers automatic alerts when the model's risk profile changes.
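
To make the fraud example concrete, here is a minimal sketch of the per-group false positive check. Everything in it is an assumption for illustration: the record shape `(group, flagged, is_fraud)`, the function names, and the 0.05 alert gap are hypothetical, not values taken from the Act.

```python
from collections import defaultdict


def false_positive_rate_by_group(records):
    """records: iterable of (group, flagged, is_fraud) tuples (hypothetical shape)."""
    false_positives = defaultdict(int)
    negatives = defaultdict(int)
    for group, flagged, is_fraud in records:
        if not is_fraud:                 # only legitimate claims can be false positives
            negatives[group] += 1
            if flagged:
                false_positives[group] += 1
    return {g: false_positives[g] / n for g, n in negatives.items() if n > 0}


def fpr_alerts(rates, max_gap=0.05):
    """Groups whose FPR exceeds the lowest group's FPR by more than max_gap."""
    if not rates:
        return []
    baseline = min(rates.values())
    return sorted(g for g, r in rates.items() if r - baseline > max_gap)


# Toy data: four legitimate claims and one fraudulent claim per group.
records = [
    ("A", True, False), ("A", False, False), ("A", False, False), ("A", False, False),
    ("B", True, False), ("B", True, False), ("B", False, False), ("B", False, False),
    ("A", True, True), ("B", True, True),
]
rates = false_positive_rate_by_group(records)
alerts = fpr_alerts(rates)
```

In a real risk registry, the alert would be written against the model version that produced it, so the risk record and the model artifact stay linked.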

2. Data Governance

Training, validation, and testing datasets must be relevant, representative, and documented

What the law says

Documented data governance covering collection processes, data preparation, assumptions, bias assessment, and gap identification. Datasets must be free of errors to the extent possible.

What to build

Data lineage tracking from source to model input. Bias detection pipelines (statistical parity, equalized odds). Data quality dashboards with automated anomaly detection. Versioned documentation alongside model artifacts.

In practice — Fintech

Your credit scoring model trains on five years of loan data. Data governance means documenting that 2020–2021 data has COVID-era anomalies, certain regions are underrepresented, and bias audits show no discrimination by protected characteristics.
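
The two fairness metrics named above can be sketched in a few lines of plain Python. This is a rough illustration on toy data, assuming binary predictions and labels; the function names and the region-based grouping are hypothetical, and a production audit would more likely use a dedicated fairness library.

```python
def _rate(values):
    """Share of positive (1) entries; 0.0 for an empty list."""
    return sum(values) / len(values) if values else 0.0


def statistical_parity_difference(preds, groups, a, b):
    """P(pred = 1 | group a) minus P(pred = 1 | group b)."""
    preds_a = [p for p, g in zip(preds, groups) if g == a]
    preds_b = [p for p, g in zip(preds, groups) if g == b]
    return _rate(preds_a) - _rate(preds_b)


def equalized_odds_gaps(preds, labels, groups, a, b):
    """Return (TPR gap, FPR gap) between groups a and b."""
    def tpr_fpr(group):
        on_positives = [p for p, y, g in zip(preds, labels, groups) if g == group and y == 1]
        on_negatives = [p for p, y, g in zip(preds, labels, groups) if g == group and y == 0]
        return _rate(on_positives), _rate(on_negatives)

    tpr_a, fpr_a = tpr_fpr(a)
    tpr_b, fpr_b = tpr_fpr(b)
    return tpr_a - tpr_b, fpr_a - fpr_b


# Toy data: 1 = approved (preds) / repaid (labels); not real loan outcomes.
preds = [1, 1, 0, 0, 1, 0, 0, 0]
labels = [1, 0, 1, 0, 1, 1, 0, 0]
groups = ["north"] * 4 + ["south"] * 4

spd = statistical_parity_difference(preds, groups, "north", "south")
tpr_gap, fpr_gap = equalized_odds_gaps(preds, labels, groups, "north", "south")
```

Storing these numbers alongside the dataset version is what turns a one-off audit into documented data governance.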

3. Technical Documentation

Complete technical documentation before market placement, kept continuously updated

What the law says

Complete technical documentation proving your system meets all requirements, drawn up before the system is placed on the market and kept up to date.

What to build

System design documents covering architecture, data flows, and decision logic. Model cards for every ML model. Training methodology docs. Validation results with clear pass/fail criteria. Auto-generated docs from code annotations and MLOps pipeline metadata.

In practice — Healthcare

Your diagnostic AI for lung nodules: documentation includes model architecture, training data demographics, performance across scanner types, known failure modes (lower sensitivity on portable X-rays), and validation against clinical benchmarks. Regenerated every release.
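
"Regenerated every release" is easier to achieve when the model card is rendered from structured metadata rather than written by hand. A minimal sketch, assuming a hypothetical `ModelCard` dataclass whose field names are illustrative and not mandated by the Act:

```python
from dataclasses import dataclass, field


@dataclass
class ModelCard:
    """Minimal model card; the fields are illustrative assumptions."""
    name: str
    version: str
    intended_use: str
    training_data: str
    metrics: dict = field(default_factory=dict)
    known_limitations: list = field(default_factory=list)

    def to_markdown(self) -> str:
        lines = [
            f"# Model card: {self.name} v{self.version}",
            "",
            f"Intended use: {self.intended_use}",
            f"Training data: {self.training_data}",
            "",
            "## Validation metrics",
        ]
        lines += [f"- {key}: {value}" for key, value in sorted(self.metrics.items())]
        lines += ["", "## Known limitations"]
        lines += [f"- {item}" for item in self.known_limitations]
        return "\n".join(lines)


# In a real pipeline these values would come from MLOps metadata, not literals.
card = ModelCard(
    name="lung-nodule-detector",   # hypothetical model name
    version="1.4.0",               # hypothetical version
    intended_use="Decision support for clinicians reviewing chest imaging",
    training_data="See data governance record for demographics and scanner types",
    metrics={"sensitivity": "from validation run", "specificity": "from validation run"},
    known_limitations=["Lower sensitivity on portable X-rays"],
)
card_md = card.to_markdown()
```

Calling `to_markdown()` in the release job keeps the documentation tied to the exact version being shipped.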

4. Record-Keeping and Logging

Automatic logging for risk identification, post-market monitoring, and traceability

What the law says

Automatic logging capabilities recording events relevant to identifying risks, facilitating post-market monitoring, and enabling traceability.

What to build

Every prediction recorded with input data, model version, confidence score, and output. Immutable audit logs (append-only, tamper-evident). 5–10 year retention. Query capabilities so regulators can reconstruct any decision.

In practice — Insurance

A customer disputes a claim denial. You pull the audit log showing: this model version processed these inputs, generated this confidence score, triggered this decision pathway, and a human reviewer saw it on this date.
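
One common way to get "append-only, tamper-evident" is a hash chain, where each entry's hash covers the previous entry's hash. The sketch below is an in-memory illustration only; the `AuditLog` class and the record fields are hypothetical, and a real deployment would sit on write-once storage with access controls.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash for the first entry


class AuditLog:
    """In-memory hash-chained log (illustrative; not production storage)."""

    def __init__(self):
        self._entries = []

    @staticmethod
    def _digest(prev_hash: str, record: dict) -> str:
        payload = json.dumps(record, sort_keys=True)
        return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()

    def append(self, record: dict) -> str:
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS_HASH
        entry_hash = self._digest(prev_hash, record)
        self._entries.append({"record": record, "prev_hash": prev_hash, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = GENESIS_HASH
        for entry in self._entries:
            if entry["prev_hash"] != prev_hash:
                return False
            if entry["hash"] != self._digest(prev_hash, entry["record"]):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append({"claim_id": "C-1", "model_version": "2.3.1", "confidence": 0.91, "output": "deny"})
log.append({"claim_id": "C-1", "reviewer": "analyst-7", "action": "reviewed"})
chain_ok = log.verify()
```

If anyone later edits the first record, `verify()` returns `False`. That property is what lets you show a regulator the log is intact.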

5. Transparency and User Information

Sufficiently transparent design enabling users to interpret and use output appropriately

What the law says

High-risk AI must be transparent enough to enable users to interpret and use the output appropriately. Instructions for use must be provided.

What to build

Explainability layers — SHAP, LIME, attention visualization, or counterfactual explanations. User-facing explanations for non-technical people. Clear capability/limitation documentation. Confidence indicators on outputs.

In practice — Fintech

A loan applicant receives a rejection. The system provides: "The primary factors were: debt-to-income ratio (35% weight), length of credit history (25%), and recent credit inquiries (20%)." Not just a score — an explanation.
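
As a rough illustration of how percentages like these can be derived, the sketch below ranks per-feature contributions for a linear scoring model. It is a simplified stand-in for SHAP or LIME, not their API; the weights, the standardized feature values, and the function name are all hypothetical.

```python
def explain_decision(weights, applicant, baseline, top_n=3):
    """Rank features by the absolute size of weight * (value - baseline).

    Returns (feature, percent share of total absolute contribution) pairs.
    Only meaningful for a linear model; other models need SHAP-style methods.
    """
    contributions = {
        name: weights[name] * (applicant[name] - baseline[name]) for name in weights
    }
    total = sum(abs(c) for c in contributions.values())
    if total == 0:
        return []
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return [(name, round(100 * abs(c) / total)) for name, c in ranked[:top_n]]


# Hypothetical standardized features (z-scores), so the baseline is zero.
weights = {"debt_to_income": 1.0, "credit_history_years": -0.8, "recent_inquiries": 0.4}
applicant = {"debt_to_income": 2.0, "credit_history_years": -1.5, "recent_inquiries": 2.0}
baseline = {"debt_to_income": 0.0, "credit_history_years": 0.0, "recent_inquiries": 0.0}

factors = explain_decision(weights, applicant, baseline)
message = "The primary factors were: " + ", ".join(f"{n} ({p}%)" for n, p in factors)
```

The user-facing layer would then map internal feature names to plain-language labels before showing the message to an applicant.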

6. Human Oversight

Effective human oversight including understanding, monitoring, and intervention capabilities

What the law says

Designed to allow effective human oversight — ability to understand capabilities, monitor operation, and intervene or override decisions.

What to build

Override mechanisms for authorized users. Anomaly/drift dashboards. Configurable automation levels (full auto, human-in-the-loop, human-on-the-loop). Kill switches. Escalation workflows below confidence threshold.

In practice — Healthcare

Your CDS flags a potential diagnosis. The clinician can accept, modify, or reject. Below 70% confidence, it auto-routes to a senior specialist. A red button takes the system offline if dangerous patterns emerge.
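
The routing logic in that example is small enough to sketch directly. The 70% threshold comes from the example above; the `OversightGate` class, the `Route` names, and the single boolean kill switch are hypothetical simplifications.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    CLINICIAN_REVIEW = "clinician_review"      # clinician accepts, modifies, or rejects
    SENIOR_SPECIALIST = "senior_specialist"    # low-confidence escalation
    SYSTEM_OFFLINE = "system_offline"          # kill switch engaged


@dataclass
class OversightGate:
    confidence_threshold: float = 0.70
    kill_switch_engaged: bool = False

    def engage_kill_switch(self) -> None:
        """The 'red button': every later request is refused until reset."""
        self.kill_switch_engaged = True

    def route(self, confidence: float) -> Route:
        if self.kill_switch_engaged:
            return Route.SYSTEM_OFFLINE
        if confidence < self.confidence_threshold:
            return Route.SENIOR_SPECIALIST
        return Route.CLINICIAN_REVIEW


gate = OversightGate()
high = gate.route(0.92)
low = gate.route(0.55)
gate.engage_kill_switch()
after_stop = gate.route(0.99)
```

Note that no path returns an automated final decision: in this sketch a human always sees the output, which matches the human-in-the-loop setting described above.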

7. Accuracy, Robustness, and Cybersecurity

Appropriate levels of accuracy, robustness, and cybersecurity throughout the lifecycle

What the law says

Appropriate accuracy, robustness, and cybersecurity throughout the lifecycle, including resilience against errors, faults, and exploitation attempts.

What to build

Continuous performance monitoring with regression detection. Adversarial testing and red-teaming in release process. Data poisoning detection. Robustness testing. Encryption, access controls, vulnerability scanning. Fallback mechanisms.

In practice — Insurance

Your pricing model gets tested against adversarial inputs. Monthly distribution shift analyses compare production data to training data. Unknown input patterns get flagged for manual review instead of producing potentially wrong prices.
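
One widely used way to run that monthly comparison is the Population Stability Index (PSI). The sketch below is a minimal pure-Python version for a single numeric feature, assuming non-empty samples and equal-width bins taken from the training data; the 0.25 alert level is a common rule of thumb, not a figure from the Act.

```python
import math


def population_stability_index(expected, actual, bins=10, eps=1e-6):
    """PSI between a training sample (expected) and a production sample (actual)."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0      # guard against a constant feature

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)   # clamp out-of-range production values
            counts[idx] += 1
        # eps avoids log(0) when a bin is empty
        return [max(c / len(values), eps) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ai, ei in zip(a, e))


# Toy data: production either matches training or is shifted upward.
training = list(range(100))
production_same = list(range(100))
production_shifted = [v + 40 for v in range(100)]

psi_same = population_stability_index(training, production_same)
psi_shifted = population_stability_index(training, production_shifted)
needs_review = psi_shifted > 0.25   # rule-of-thumb threshold, an assumption
```

A result above the threshold would feed the manual-review path rather than block pricing outright.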

Your Industry Playbook

General requirements are one thing. Here's how EU AI Act compliance actually plays out in your specific industry.

Insurance — What Changes for You

Insurance has been using actuarial models forever. What's new is that AI-driven models are now subject to explicit regulatory requirements that traditional statistical models weren't built to meet.

Top 5 engineering priorities:

Explainability for claims decisions — Every claim decision needs to be explainable to the policyholder and the regulator. Build SHAP or equivalent explanation layers now.

Bias auditing on pricing models — The EU AI Act explicitly targets discriminatory outcomes. Run fairness metrics across protected characteristics on every model update.

Audit trail for underwriting — Immutable, queryable logs that reconstruct any underwriting decision going back years.

Human override on high-value claims — High-value or disputed claims need clear human-in-the-loop mechanisms.

Continuous monitoring for drift — Insurance data shifts with market cycles, catastrophic events, and regulatory changes.

Regulatory overlap: Solvency II (capital requirements, risk management) and the Insurance Distribution Directive (IDD, transparency). The overlap is significant: a solid risk management system covers ground across all three frameworks.

Fintech — What Changes for You

Fintech companies have been building on top of regulatory frameworks for years — MiFID II, PSD2, AML directives. The EU AI Act adds a new layer specifically about the AI itself, not just the financial product.

Top 5 engineering priorities:

Right to explanation for credit decisions — Non-negotiable. If AI influences whether someone gets a loan, they need to understand why.

Data governance for training datasets — Financial data carries historical biases. Document them, mitigate them, prove you've done both.

Model risk management framework — Align with Article 9 while maintaining your existing model validation processes. Don't build two systems — integrate.

Real-time monitoring for AML systems — A missed suspicious transaction due to model drift is now a compliance failure under two frameworks.

Access controls and cybersecurity — Financial AI systems are high-value targets. Article 15 aligns with DORA demands.

Regulatory overlap: MiFID II, PSD2, AMLD, and DORA alongside the EU AI Act. Silver lining: DORA's operational resilience requirements overlap substantially with Article 15. Build once, document for both.

Healthcare — What Changes for You

Healthcare AI sits at the intersection of two heavy regulatory regimes: the EU AI Act and the Medical Devices Regulation (MDR) / In Vitro Diagnostic Regulation (IVDR). If your AI qualifies as a medical device — and many do — you're dealing with both.

Top 5 engineering priorities:

Clinical validation documentation — Not just ML metrics. Clinical efficacy evidence that meets both MDR and EU AI Act standards. Prospective studies required.

Patient-facing transparency — When AI influences diagnosis or treatment, the patient and clinician must understand AI's role.

Failsafe mechanisms — Healthcare AI errors can cause physical harm. Build aggressive fallback mechanisms.

Data governance for health data — GDPR special category data on top of AI Act governance. Double the documentation, double the protection.

Human oversight in clinical workflows — Not a dashboard nobody checks. Woven into workflows where clinicians naturally interact.

Regulatory overlap: MDR/IVDR conformity assessments overlap heavily with the EU AI Act's requirements. CE-marked medical devices are partway there, but the AI Act adds data governance and continuous monitoring requirements that MDR doesn't cover as explicitly. GDPR Article 9 adds another layer.

| Requirement | Priority | Deadline |
|---|---|---|
| Map all AI systems to Annex III categories | Critical | Q1 2026 |
| Implement explainability layer (SHAP/LIME) for claims AI | Critical | Q2 2026 |
| Build immutable audit trail for underwriting decisions | Critical | Q2 2026 |
| Run bias audit across all pricing and scoring models | High | Q2 2026 |
| Deploy human oversight dashboard with override | High | Q2 2026 |
| Generate technical documentation for all high-risk systems | High | Q2 2026 |
| Establish continuous monitoring and drift detection | High | Q3 2026 |
| Align risk management with Solvency II framework | Medium | Q3 2026 |
| Train operations staff on AI oversight | Medium | Q3 2026 |
| Conduct conformity assessment | Critical | July 2026 |

Build Compliant from Day One — Don't Retrofit

Retrofitting EU AI Act compliance into an existing AI system costs 3–5x more than building it in from the start, the same pattern behind many failed AI agent projects.

Building AI compliance after the system is already in production is like adding a fire escape to a building that's already occupied. You can do it. But it's expensive, disruptive, and everyone's going to have a bad time for months.

3–5×

The cost multiplier when retrofitting compliance into an existing AI system vs. building it in from the start.

The architecture pattern that works is four layers built in from the start:

Governance Layer

Risk management, compliance configuration, policy enforcement

Audit Layer

Decision logging, data lineage, immutable record-keeping

Explainability Layer

Model-level and decision-level explanations, bias monitoring

Human Oversight Layer

Review interfaces, override mechanisms, escalation workflows

6-Month Compliance Roadmap: March to August 2026

March 2026 — Discovery & Classification

Inventory all AI systems. Classify each under Annex III risk tiers. Identify regulatory overlaps (MDR, DORA, Solvency II). Gap analysis against Articles 9–15.
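
The discovery step boils down to an inventory plus a set difference. Here is a minimal sketch of a gap analysis against Articles 9–15, assuming a hypothetical `AISystem` record where each system lists the article numbers it already has controls for. The system names and control sets are invented for illustration.

```python
from dataclasses import dataclass, field

# Article numbers and short titles as used in the requirements table above.
REQUIRED_ARTICLES = {
    9: "Risk management",
    10: "Data governance",
    11: "Technical documentation",
    12: "Record-keeping and logging",
    13: "Transparency",
    14: "Human oversight",
    15: "Accuracy, robustness, cybersecurity",
}


@dataclass
class AISystem:
    name: str
    use_case: str
    high_risk: bool
    controls_in_place: set = field(default_factory=set)  # article numbers covered


def gap_analysis(systems):
    """Map each high-risk system to the requirements it is still missing."""
    report = {}
    for system in systems:
        if not system.high_risk:
            continue
        missing = sorted(set(REQUIRED_ARTICLES) - system.controls_in_place)
        report[system.name] = [f"Art. {n}: {REQUIRED_ARTICLES[n]}" for n in missing]
    return report


inventory = [
    AISystem("credit-scorer", "Credit scoring", True, {9, 10, 11, 12, 15}),
    AISystem("claims-triage", "Claims assessment", True, {12}),
    AISystem("faq-chatbot", "Customer FAQ", False),
]
gaps = gap_analysis(inventory)
```

The output of this step is effectively the backlog for April through July.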

April 2026 — Architecture & Data Governance

Design governance, audit, explainability, and oversight layers. Implement data lineage tracking. Begin technical documentation framework. Start bias audit on highest-risk models.

May 2026 — Core Implementation

Build audit trail and logging infrastructure. Implement explainability layers (SHAP, LIME, counterfactuals). Deploy human oversight interfaces. Set up continuous monitoring pipelines.

June 2026 — Integration & Testing

Integrate compliance layers with production systems. Run end-to-end testing of audit trails. Validate explainability outputs with domain experts. Stress-test human oversight workflows.

July 2026 — Conformity Assessment & Documentation

Complete technical documentation packages. Conduct conformity assessment (self or third-party). Train operations and compliance staff. Remediate any gaps.

August 2, 2026 — Go-Live & Monitoring

Compliance enforcement begins. Activate production monitoring dashboards. Begin post-market monitoring obligations. Establish incident reporting workflows.

When You Need an Engineering Partner

Some organizations can handle this internally. But be honest with yourself about capacity. You probably need external help if:

- Your AI systems were built without compliance in mind and need significant architectural changes

- Your engineering team is at capacity and can't absorb a 6-month compliance sprint

- You're deploying across multiple regulatory jurisdictions (EU AI Act + MDR + DORA + GDPR)

- You need to stand up monitoring, explainability, and audit infrastructure from scratch

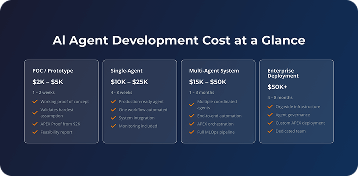

This is the kind of work we do at Softermii. Our AI development team has been deploying AI systems in regulated industries since 2014, and our APEX platform was built with governance layers, audit trails, human oversight mechanisms, and bias testing as core architectural components — not add-ons.

We guarantee no more than 15% deviation on scope and timeline. That matters when you're working toward a regulatory deadline that doesn't move. See our AI agent development cost breakdown for detailed pricing on compliance-ready builds.

Frequently Asked Questions

When does the EU AI Act take effect?

The EU AI Act entered into force on August 1, 2024, with a phased rollout. Prohibited AI practices were banned from February 2, 2025. General-purpose AI rules apply from August 2, 2025. The most impactful deadline — high-risk AI system obligations — takes full effect on August 2, 2026.

What are the penalties for EU AI Act non-compliance?

Penalties under the EU AI Act reach EUR 35 million or 7% of global annual turnover for prohibited practices, EUR 15 million or 3% for high-risk system violations, and EUR 7.5 million or 1% for providing incorrect information to authorities. The top tier significantly exceeds GDPR's maximum of EUR 20 million or 4% of global turnover.
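
To see what these tiers mean for a given company, here is a small worked calculation. It assumes the "whichever is higher" rule applies to every tier for a company that is not an SME (SMEs are treated more leniently under the Act). The tier keys and function name are hypothetical, and the result is a ceiling, not a predicted fine.

```python
# (fixed ceiling in EUR, percent of global annual turnover) per tier
PENALTY_TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "high_risk_violation": (15_000_000, 3),
    "incorrect_information": (7_500_000, 1),
}


def max_fine_eur(tier: str, global_turnover_eur: int) -> int:
    """Upper bound on the fine: the higher of the fixed amount and the turnover share."""
    fixed_ceiling, percent = PENALTY_TIERS[tier]
    turnover_share = global_turnover_eur * percent // 100  # integer maths avoids float noise
    return max(fixed_ceiling, turnover_share)


mid_market = max_fine_eur("high_risk_violation", 100_000_000)     # 3% is EUR 3M, ceiling stays EUR 15M
enterprise = max_fine_eur("prohibited_practice", 1_000_000_000)   # 7% is EUR 70M, above EUR 35M
```

For smaller companies the fixed amount dominates, and for large groups the percentage does.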

Is my AI system considered high-risk under the EU AI Act?

If your AI system makes or influences decisions about credit, insurance, healthcare, employment, or law enforcement, it's almost certainly high-risk under Annex III. Credit scoring, claims processing, medical diagnostics, and loan approval are explicitly listed. Check your use case against Annex III categories.

Does the EU AI Act apply to companies outside the EU?

Yes. The EU AI Act applies to any company that places AI systems on the EU market or whose AI system outputs are used within the EU, regardless of where the company is headquartered. This extraterritorial scope mirrors GDPR's approach and affects global companies serving EU customers.

What documentation is required for high-risk AI systems?

High-risk AI systems require technical documentation covering system design, data governance practices, training methodologies, testing results, risk management measures, human oversight capabilities, and accuracy metrics. This documentation must be created before deployment and kept continuously updated throughout the system's lifecycle.

How does the EU AI Act affect AI in insurance?

Insurance AI for claims assessment, underwriting, pricing, and fraud detection is classified high-risk under Annex III Category 5(b). Insurers must implement explainability layers, bias testing, decision audit trails, and human oversight mechanisms. These requirements interact with existing Solvency II and IDD obligations.

How does the EU AI Act affect AI in fintech and banking?

AI used for credit scoring, loan approval, KYC/AML screening, and robo-advisory is high-risk under Annex III Category 5(b). Fintech companies must provide individual decision explanations, maintain data governance documentation, and ensure human override capability. These requirements layer on top of MiFID II, PSD2, and DORA.

How does the EU AI Act affect AI in healthcare?

Healthcare AI for diagnostics, clinical decision support, and triage is high-risk under Annex III Category 1 (medical devices) or Category 5(b). Compliance requires clinical validation, patient-facing transparency, robust failsafe mechanisms, and integration with Medical Devices Regulation (MDR) conformity processes.

What is a conformity assessment under the EU AI Act?

A conformity assessment is the process of verifying that a high-risk AI system meets all EU AI Act requirements before it can be placed on the market. Most high-risk AI systems can use internal self-assessment, but certain categories — including some medical devices and biometric systems — require third-party assessment by notified bodies.

Can I still use AI for credit scoring under the EU AI Act?

Yes, AI-based credit scoring remains fully legal under the EU AI Act. However, it's classified as high-risk, meaning you must implement risk management, data governance, explainability, human oversight, logging, and cybersecurity requirements. You'll also need to provide individual explanations to applicants about how the AI influenced their credit decision.

What Happens Next Is Up to You

You have about six months. The EU AI Act high-risk deadline doesn't negotiate, and it doesn't care about your product roadmap.

The question isn't whether to comply — it's whether you'll build compliance into your architecture or bolt it on later at 3–5x the cost. Every week you wait makes the retrofit more expensive and the deadline less forgiving.

Here's what I'd do if I were in your position: spend this week mapping your AI systems to Annex III. Just that. Know what you're dealing with. Then build the six-month plan working backward from August 2.

The companies that treat this as an engineering problem, not a legal problem, are the ones who'll get through August 2026 without scrambling.
