Why Most AI Agent Projects Fail (And How to Be the Exception)


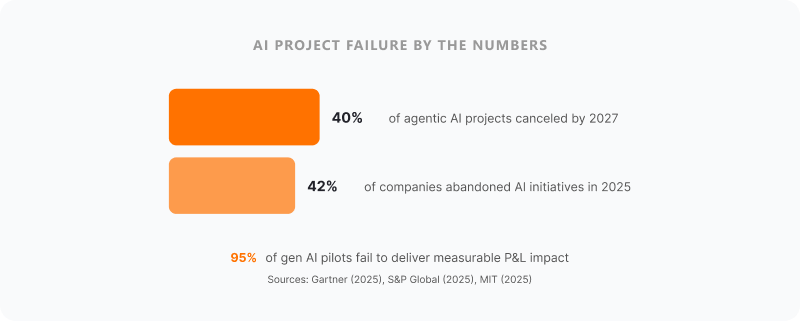

The failure numbers keep getting worse, not better.

Gartner predicted in June 2025 that over 40% of agentic AI projects will be canceled by end of 2027. S&P Global's 2025 enterprise survey found that 42% of companies abandoned most of their AI initiatives that year — up from 17% in 2024. And MIT's "State of AI in Business 2025" report delivered the most brutal data point yet: 95% of generative AI pilots at companies are failing to deliver measurable P&L impact.

These aren't experimental moonshot projects. These are companies that committed real money — five and sometimes six figures — to building AI agent systems they genuinely needed. Claims processing agents for insurance companies. KYC automation for banks. Dispatch optimization for logistics operators. Practical, high-value use cases with clear ROI on paper.

So what's going wrong?

At Softermii, we've been building custom software since 2014 and have delivered AI agent systems across insurance, fintech, logistics, and healthcare. Some projects shipped fast and delivered exactly what was promised. Others, and I'm being honest here, required us to rescue clients from partially built systems that another team had abandoned. Across 100+ delivered projects, the patterns of failure are remarkably consistent.

This article is for CTOs, VPs of Engineering, and operations leaders at mid-market companies who are either planning their first AI agent project or trying to figure out why their current one is stuck. Agentic AI is the defining technology category of 2026 — the market hit approximately $7-8 billion in 2025 and is projected to reach $47-65 billion by 2030. Gartner predicts 33% of enterprise software will include agentic AI by 2028. The stakes for getting this right have never been higher.

Here are the seven failure patterns we see over and over, and how to avoid each one.

The 7 Reasons AI Agent Projects Fail

1. Starting with Technology, Not a Business Problem

This is the most common reason AI agent projects fail, and it's the most preventable.

A VP reads about multi-agent orchestration. The CTO gets excited about a new foundation model. Someone on the board mentions that competitors are "doing AI." So the team spins up a project: "Let's build an AI agent."

For what, exactly?

The question sounds obvious but it gets skipped constantly. RAND Corporation's research on AI project failures identified this as the number one root cause: teams and stakeholders have fundamentally different understandings of what the AI project is supposed to do. They start with "we should have AI agents" instead of "claims adjudicators spend 6 hours per day on tasks that follow a decision tree."

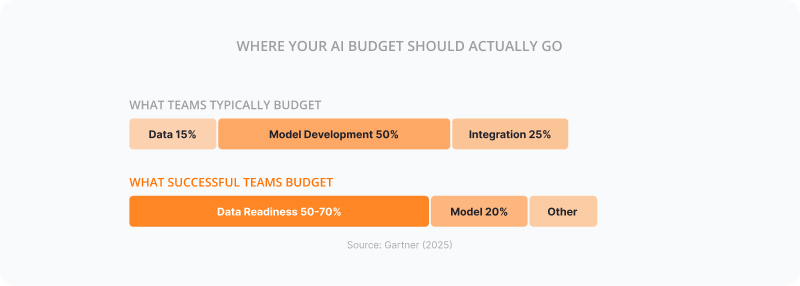

MIT's 2025 report uncovered a related problem — misallocation of budgets. Over half of generative AI budgets flow to sales and marketing tools, but the biggest measurable ROI comes from back-office automation: eliminating BPO, cutting external agency costs, streamlining operations. Companies are spending money where the demos are impressive, not where the dollars are.

#1 root cause of AI failure: teams start with "we should have AI" instead of identifying a specific costly workflow to automate. Source: RAND Corporation.

The fix is almost embarrassingly simple. Start with the process, not the model. Map the workflow you want to automate. Identify where human judgment is genuinely needed versus where it's just habit. Calculate the actual cost of the current process. Then — and only then — ask whether an AI agent is the right solution.

Sometimes it isn't. Sometimes a rules engine or a simple API integration does the job for a tenth of the cost. That's fine. Better to find out before you've spent $50K on an agent that should have been an if-else statement.
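The "calculate the actual cost of the current process" step is simple arithmetic, and it is worth writing down before any model is chosen. A minimal sketch follows; the function name, headcount, hourly rate, and working-day count are hypothetical placeholders, and only the 6 hours per day figure comes from the claims adjudicator example above.

```python
def annual_process_cost(people: int, hours_per_day: float,
                        hourly_rate: float, working_days: int = 230) -> float:
    """Yearly cost of the staff time spent on one manual workflow."""
    return people * hours_per_day * hourly_rate * working_days


# Hypothetical inputs: 10 adjudicators, 6 hours/day, $45/hour loaded rate.
current_cost = annual_process_cost(people=10, hours_per_day=6, hourly_rate=45)
print(f"Current process cost: ${current_cost:,.0f} per year")
```

If that number is small, a rules engine or an API integration is probably the better investment than an agent.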

2. Skipping the POC (or Building the Wrong One)

Companies fall into two traps here. The first is skipping the proof of concept entirely and going straight to a production build. "We know what we want, let's just build it." This is like furnishing a house before checking whether the foundation can hold the weight.

The second trap is worse, because it feels productive: building a POC that proves the easy part. Your team spends three weeks showing that an LLM can summarize a document. Great. Everyone's impressed. But the hard question was never whether the model can summarize — it was whether your agent can reliably extract structured data from 47 different insurance form formats, handle edge cases without hallucinating, and do it within your compliance requirements.

A good AI agent POC validates the hardest technical assumption, not the easiest. It should answer the question that keeps you up at night, not the one that makes a nice demo.
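One way to make "validate the hardest assumption" concrete is to score the POC per input format rather than in aggregate, so a strong average cannot hide the formats that fail. The sketch below assumes you have a labeled sample for each form format; the function names, the format labels, and the 95% threshold are illustrative assumptions, not a prescribed standard.

```python
from collections import defaultdict


def accuracy_by_format(results):
    """results: iterable of (form_format, extracted, expected) tuples.

    Returns {form_format: fraction of documents extracted exactly right}.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for form_format, extracted, expected in results:
        total[form_format] += 1
        if extracted == expected:
            correct[form_format] += 1
    return {fmt: correct[fmt] / total[fmt] for fmt in total}


def failing_formats(results, threshold=0.95):
    """Formats whose accuracy falls below the go/no-go threshold."""
    scores = accuracy_by_format(results)
    return sorted(fmt for fmt, score in scores.items() if score < threshold)


# Hypothetical labeled sample: two documents for each of two formats.
sample = [
    ("format_a", {"policy": "P-1"}, {"policy": "P-1"}),
    ("format_a", {"policy": "P-2"}, {"policy": "P-2"}),
    ("format_b", {"policy": "P-3"}, {"policy": "P-9"}),
    ("format_b", {"policy": "P-4"}, {"policy": "P-4"}),
]
print(failing_formats(sample))
```

A POC that reports only overall accuracy would show 75% here; the per-format view shows that one format works and the other is the real risk.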

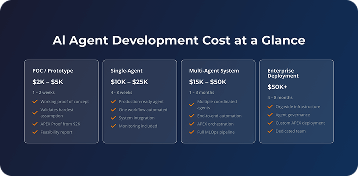

This is actually why we structured our APEX Proof tier the way we did — a working proof of concept in 5 days, starting from $2K, focused specifically on validating the riskiest assumption in your AI agent architecture. If the hard part doesn't work, you've lost five days and two thousand dollars instead of two months and fifty thousand.

3. Underestimating Data Work

Everyone wants to talk about models. Nobody wants to talk about data pipelines.

60% of AI projects will be abandoned due to lack of AI-ready data by 2026. Winning programs allocate 50-70% of budget to data readiness. Source: Gartner.

We worked with an insurance client who wanted to build an underwriting agent. Their historical policy data was spread across three systems, two of which used different field naming conventions, and one of which stored dates in four different formats within the same table. Fixing that took longer than building the agent itself.

The AI project failure rate is so high partly because teams budget for model development and integration work but treat data preparation as a minor preliminary step. It's not. Budget for it explicitly. Staff for it. Give it its own timeline. At Softermii, we handle data preparation as a dedicated workstream in every AI integration project — not an afterthought that gets squeezed into sprint zero.
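To give a sense of what this workstream looks like in code, here is a minimal sketch of normalizing a date column that mixes several formats, as in the underwriting example above. The four format strings are assumptions for illustration; a real audit of the source tables decides the list, and ambiguous cases such as day-first versus month-first slashes need a rule per source system.

```python
from datetime import date, datetime
from typing import Optional

# Assumed formats, tried in order. Not taken from any real client schema.
KNOWN_FORMATS = ("%Y-%m-%d", "%m/%d/%Y", "%d.%m.%Y", "%Y%m%d")


def normalize_date(raw: str) -> Optional[date]:
    """Parse a raw date string, or return None so it can go to manual review."""
    for fmt in KNOWN_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date()
        except ValueError:
            continue
    return None


rows = ["2021-03-05", "03/05/2021", "05.03.2021", "20210305", "last spring"]
parsed = [normalize_date(r) for r in rows]
unparsed = [r for r, d in zip(rows, parsed) if d is None]
print(f"{len(unparsed)} of {len(rows)} rows need manual review: {unparsed}")
```

Returning `None` instead of guessing keeps bad values out of the agent's inputs and gives you a count of how much cleanup remains.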

4. Building Single-Agent When You Need Multi-Agent (and Vice Versa)

This one's more nuanced, but it causes a lot of expensive false starts.

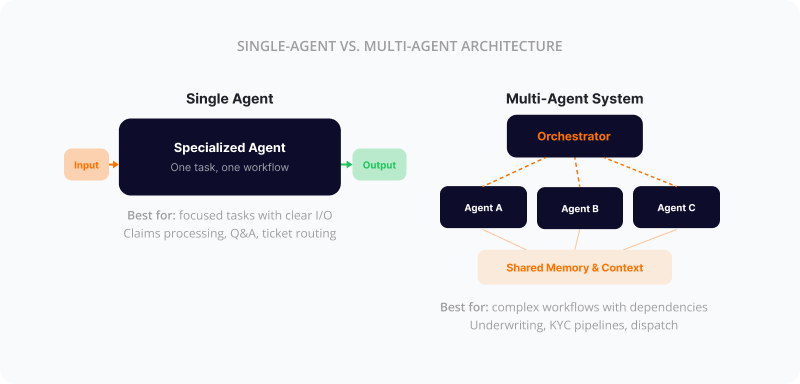

A single AI agent handles one task or workflow. Think of it as a very capable specialist — it processes claims, or it answers customer questions, or it routes support tickets. A multi-agent AI system is more like a team: multiple specialized agents coordinating with each other, passing information, making collective decisions.

| Mistake | What Happens | Real Cost |
|---|---|---|
| Over-engineering: building multi-agent when single-agent would suffice | Unnecessary complexity, longer build time, more failure points | 3-5x the budget of an appropriate solution |
| Under-engineering: building single-agent when the workflow requires coordination | Agent hits walls it can't get past, manual intervention still required | Full rebuild after 2-3 months |
| No architecture planning: letting the agent design emerge organically | Spaghetti agent topology, impossible to debug or scale | Technical debt that compounds monthly |

The decision framework isn't complicated. If your workflow has one primary task with clear inputs and outputs, start with a single agent. If it involves multiple steps where different types of reasoning or different data sources are needed, and those steps have dependencies — that's where multi-agent orchestration earns its complexity.
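The rule of thumb above can be written as a small check. This is a sketch of the heuristic as stated, not a formal method; the `Workflow` fields and the `recommend_architecture` name are hypothetical, and a real assessment would weigh more factors.

```python
from dataclasses import dataclass


@dataclass
class Workflow:
    steps: int                     # distinct steps in the workflow
    reasoning_types: int           # e.g. extraction, classification, planning
    data_sources: int              # systems the workflow must read from
    steps_have_dependencies: bool  # does one step need another's output?


def recommend_architecture(w: Workflow) -> str:
    """Default to single-agent; multi-agent must earn its complexity."""
    needs_variety = w.reasoning_types > 1 or w.data_sources > 1
    if w.steps > 1 and needs_variety and w.steps_have_dependencies:
        return "multi-agent"
    return "single-agent"


ticket_routing = Workflow(steps=1, reasoning_types=1, data_sources=1,
                          steps_have_dependencies=False)
underwriting = Workflow(steps=4, reasoning_types=3, data_sources=3,
                        steps_have_dependencies=True)
print(recommend_architecture(ticket_routing))
print(recommend_architecture(underwriting))
```

The function returns "single-agent" unless every condition for coordination is met, which mirrors the advice to start simple.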

Most AI agent failures in this category come from over-engineering. Teams build elaborate multi-agent systems for problems that a well-designed single agent could handle. The architecture should match the problem's actual complexity, not the team's ambition.

This is where having a pre-built orchestration framework makes a real difference. APEX includes pre-built orchestration patterns, memory management, and agent communication — so if your project does require multi-agent coordination, you're not building that infrastructure from scratch. You're configuring it.

5. No Evaluation Framework

This might be the most dangerous failure pattern because it's invisible until production.

"If you can't measure it, you can't ship it." That sounds like a cliche, but in agentic AI implementation, it's literal. Teams build agents, run a few manual tests, see that it mostly works, and call it ready. Then the agent hallucinates a policy number in production. Or confidently gives a customer incorrect information about their coverage. Or makes a credit decision based on a misinterpreted data field.

S&P Global's 2025 enterprise survey revealed a troubling trend: the proportion of organizations citing positive impact from AI investments actually fell across every enterprise objective assessed, year-over-year. Revenue growth impact perception dropped from 81% to 76%. Cost management impact dropped from 79% to 74%. Organizations are spending more on AI but perceiving less value — largely because they lack the evaluation infrastructure to measure real outcomes and iterate.

What you need, before you even start building, is an evaluation framework that answers the questions a few manual tests can't: how accurate is the agent, how often does it hallucinate, how does it handle edge cases, and how quickly does it respond?

McKinsey's latest State of AI report found that only 6% of organizations are "AI high performers" attributing more than 5% of EBIT to AI. A big reason for that gap? The high performers invested in evaluation infrastructure before they invested in production infrastructure.

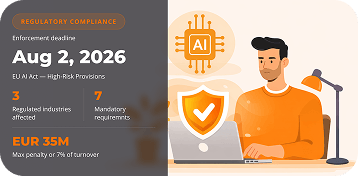

6. Ignoring Compliance Until It's Too Late

Here's a timeline that should concern anyone building AI agents in regulated industries: the EU AI Act's high-risk provisions take effect in August 2026. Prohibited AI practices are already banned (since February 2025). General-purpose AI model rules are already live (since August 2025). And the penalty structure is already enforceable — up to EUR 35 million or 7% of global annual turnover for violations.

If you're building AI agents for insurance, healthcare, or fintech — your systems almost certainly fall under the high-risk category. Which means transparency requirements, human oversight mandates, data governance standards, and documentation obligations that need to be baked into your architecture from day one.

The compliance costs are real. CEPS estimates that SMEs setting up a new Quality Management System for EU AI Act compliance face EUR 193,000-330,000 initially, with EUR 71,400 annually for maintenance. Large enterprises face $8-15M initial investment for high-risk systems.

The companies that are building compliance into their AI agent architecture now will spend a fraction of what companies that try to retrofit later will pay. Retrofitting compliance into an existing agentic AI system is 3-5x more expensive than building it in from the start.

If your AI agent development partner isn't asking about compliance in the first conversation, that should worry you. At Softermii, every AI agent project in regulated industries includes compliance architecture from the discovery phase — HIPAA for healthcare, SOC 2 for fintech, state regulations for insurance, and EU AI Act readiness for any system that makes decisions affecting people.

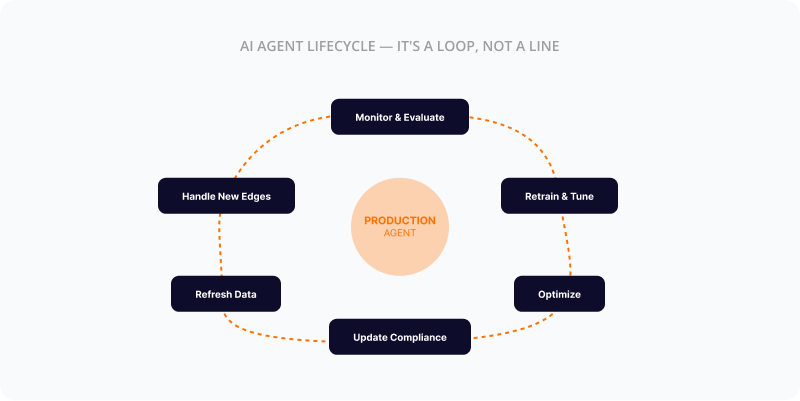

7. Treating AI Agents as a One-Time Build

This is where the analogy to traditional software breaks down, and teams that don't understand the difference end up with agents that degrade from useful to unreliable within months.

Traditional software does what you built it to do. If the requirements don't change, it works the same way on day one and day one thousand. AI agents don't work that way. Models drift. The data your agent was trained on stops reflecting current conditions. APIs that your agent depends on change their response formats. Business rules evolve. New edge cases emerge that your training data didn't cover.

An AI agent in production needs continuous monitoring, periodic retraining, and ongoing optimization. Budget 20-30% of your build cost annually for operations and evolution. That's not a luxury — it's the minimum to keep a production AI agent reliable.
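The 20-30% guideline is easy to turn into a planning number. The sketch below is plain arithmetic under that guideline; the function names and the $80K build cost are hypothetical inputs for illustration.

```python
def annual_ops_budget(build_cost: float, low_pct: int = 20, high_pct: int = 30):
    """Return the (low, high) yearly operations budget for a given build cost."""
    return build_cost * low_pct / 100, build_cost * high_pct / 100


def total_cost_of_ownership(build_cost: float, years: int, ops_pct: int = 25) -> float:
    """Build cost plus operations at a flat yearly percentage of build cost."""
    return build_cost + years * build_cost * ops_pct / 100


low, high = annual_ops_budget(80_000)
print(f"Yearly operations budget: ${low:,.0f} to ${high:,.0f}")
print(f"Three-year cost of ownership: ${total_cost_of_ownership(80_000, 3):,.0f}")
```

Over three years at the midpoint, operations add 75% on top of the original build cost, which is why it belongs in the business case from the start.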

Companies that treat AI agents as a one-time capital expenditure find that, six months later, the same agent is giving worse answers than it did at launch. And nobody notices until a customer or a regulator points it out.

The Real Cost of a Failed AI Agent Project

The sticker price is painful enough. But the actual cost of a failed AI agent project extends well beyond what you spent on development.

| Project Size | Direct Build Cost | Opportunity Cost | Total Estimated Impact |
|---|---|---|---|
| Small POC / pilot | $5K-$20K | 2-3 months of team time, delayed competitive response | $15K-$60K |
| Mid-size production agent | $20K-$80K | 4-8 months, team demoralization, stakeholder trust erosion | $60K-$250K |
| Enterprise multi-agent system | $80K-$200K+ | 6-12+ months, strategic setback, potential regulatory exposure | $200K-$600K+ |

And there's the hidden cost that CIO.com quantified in 2025: 66.5% of organizations experience AI budget overruns, with first-year overruns typically running 30-40% over initial budget. AI implementation costs increased 89% between 2023 and 2025 (Glean), largely because teams underestimate the gap between a working prototype and a production system.

The numbers that don't show up on any spreadsheet are often the worst. When an AI agent project fails, the executive team loses confidence in AI as a category. The next legitimate AI opportunity gets dismissed as "we tried that." Engineers who worked on the failed project either leave or become resistant to future AI initiatives.

89% increase in AI implementation costs from 2023 to 2025, largely because teams underestimate the gap between prototype and production. Source: Glean.

Honestly, the competitive damage might be the most significant. While you're recovering from a failed agentic AI implementation, your competitors are deploying theirs. In industries like insurance and logistics where AI agents can deliver 30-50% efficiency gains, a 12-month delay isn't just inconvenient — it's market share you're handing over.

A Framework for AI Agent Projects That Actually Ship

So how do you avoid being among the more than 40% of projects that Gartner says will be canceled? There's no magic formula, but there is a practical framework that dramatically improves your odds.

Start with the business case, not the technology

Define the specific workflow, calculate the current cost, estimate the realistic return. If the ROI doesn't clear your hurdle rate with conservative assumptions, stop here.
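A hurdle-rate check with conservative assumptions might look like the sketch below. Every figure is a hypothetical placeholder, and the ROI definition used here (net first-year benefit divided by first-year cost) is one common convention rather than the only one; use whatever your finance team applies to other projects.

```python
def first_year_roi(annual_savings: float, build_cost: float,
                   annual_ops_cost: float) -> float:
    """Net first-year benefit divided by total first-year cost."""
    total_cost = build_cost + annual_ops_cost
    return (annual_savings - total_cost) / total_cost


def clears_hurdle(roi: float, hurdle_rate: float) -> bool:
    return roi >= hurdle_rate


# Hypothetical case: $80K build, $20K/year operations, 30% hurdle rate.
optimistic = first_year_roi(annual_savings=150_000, build_cost=80_000,
                            annual_ops_cost=20_000)
# Conservative case: assume only half the projected savings materialize.
conservative = first_year_roi(annual_savings=75_000, build_cost=80_000,
                              annual_ops_cost=20_000)

print(f"Optimistic ROI {optimistic:.0%}, clears hurdle: {clears_hurdle(optimistic, 0.30)}")
print(f"Conservative ROI {conservative:.0%}, clears hurdle: {clears_hurdle(conservative, 0.30)}")
```

In this example the project only clears the hurdle under the optimistic savings estimate, which is exactly the situation where stopping is the right call.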

Validate with a focused POC

Test the hardest technical assumption in 5 days. Not a demo — a genuine test of whether the critical capability works with your actual data, in your actual environment. This is where staged approaches like APEX (Proof in 5 days, Build in 2 weeks, Evolve continuously, Scale when ready) reduce risk dramatically.

Build incrementally

Ship the minimum viable agent first. One task, one workflow, one user group. Get it into production, observe how it performs with real users and real data. Resist the urge to add capabilities before the core is solid.

Evaluate continuously

Implement automated evaluation from day one. Track accuracy, hallucination rates, edge case handling, and latency. Set thresholds that trigger alerts before users notice degradation.
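A threshold check of this kind can be very small. The sketch below assumes an evaluation job already produces the three metrics; the `EvalRun` fields, the threshold values, and the function name are hypothetical and should be set from your own baseline.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class EvalRun:
    accuracy: float            # fraction of correct answers on the eval set
    hallucination_rate: float  # fraction of answers with unsupported claims
    p95_latency_s: float       # 95th percentile response time in seconds


# Hypothetical thresholds; derive real ones from your launch baseline.
THRESHOLDS: Dict[str, float] = {
    "min_accuracy": 0.95,
    "max_hallucination_rate": 0.01,
    "max_p95_latency_s": 5.0,
}


def breached_thresholds(run: EvalRun, thresholds: Dict[str, float] = THRESHOLDS) -> List[str]:
    """Return the names of every metric that is outside its threshold."""
    alerts = []
    if run.accuracy < thresholds["min_accuracy"]:
        alerts.append("accuracy")
    if run.hallucination_rate > thresholds["max_hallucination_rate"]:
        alerts.append("hallucination_rate")
    if run.p95_latency_s > thresholds["max_p95_latency_s"]:
        alerts.append("p95_latency_s")
    return alerts


nightly = EvalRun(accuracy=0.93, hallucination_rate=0.004, p95_latency_s=6.2)
print(breached_thresholds(nightly))
```

Running a check like this on a schedule, against a fixed evaluation set, is what lets you see degradation before users do.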

Deploy with oversight

Human-in-the-loop for critical decisions, especially in regulated industries. Graceful fallback when the agent hits confidence thresholds. Comprehensive audit logging.
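The three pieces (human review, a confidence-based fallback, and audit logging) fit together in one routing function. This is a minimal sketch: the 0.85 confidence floor, the field names, and the in-memory list standing in for a durable audit store are all assumptions for illustration.

```python
import json
from datetime import datetime, timezone

CONFIDENCE_FLOOR = 0.85  # hypothetical; tune against your evaluation data


def route_decision(case_id, decision, confidence, audit_log, critical=False):
    """Send critical or low-confidence decisions to a human and log every case."""
    needs_human = critical or confidence < CONFIDENCE_FLOOR
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "case_id": case_id,
        "proposed_decision": decision,
        "confidence": confidence,
        "routed_to": "human_review" if needs_human else "auto",
    }
    audit_log.append(json.dumps(entry))  # one JSON line per decision
    return entry["routed_to"]


log = []
print(route_decision("case-001", "approve", 0.97, log))
print(route_decision("case-002", "approve", 0.60, log))
print(route_decision("case-003", "deny", 0.99, log, critical=True))
print(f"{len(log)} audit entries written")
```

Note that the agent's proposal is logged even when a human makes the final call, so the audit trail shows both what the agent suggested and where it was routed.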

Evolve and optimize

Dedicated budget and team for ongoing model updates, data pipeline maintenance, performance optimization, and compliance updates. This isn't an afterthought — it's a permanent line item.

The companies that ship successful AI agent systems aren't necessarily smarter or better-funded. They're more disciplined about validating assumptions before committing resources.

What to Look for in an AI Agent Development Partner

If you're bringing in an external team — and for most mid-market companies, that's the right call given that MIT's 2025 data shows internal AI builds succeed only about 22% of the time versus 67% when purchasing from specialized vendors — here's what actually matters.

Domain Experience in Your Industry

An AI agent for insurance is fundamentally different from one for logistics. Ask for case studies in your vertical.

Staged Approach with Real Exit Points

Avoid six-month commitments before you've validated the core concept. Look for a POC phase with a genuine decision point.

Evaluation Methodology They Can Explain

How do they test for hallucinations? How do they measure accuracy? Vague answers = insufficient thinking.

Post-Launch Operations Capability

Building the agent is half the work. Can they support it in production? What happens when something breaks at 2 AM?

Transparent Pricing with Guarantees

Scope creep kills AI projects silently. Look for partners who commit to timelines and budgets contractually.

Proven Track Record Over Years

AI agent development is still new enough that many firms are learning on your dime. Verify claims with hard evidence.

At Softermii, we've been building custom software since 2014 and AI systems across 100+ projects — with a proprietary APEX framework specifically designed for staged AI agent delivery and a 4.9 Clutch rating across 34 reviews. But regardless of who you choose, ask hard questions and verify the answers.

Industry-Specific Considerations

Not all AI agent projects are equal. The failure patterns play out differently depending on your industry, and the ROI data is starting to tell a clear story.

Insurance

Claims processing, underwriting, fraud detection. The biggest trap: agents that work on standard claims but fall apart on complex, expensive ones.

210% ROI in 12 months — Anadolu Sigorta

Fintech

KYC/AML, credit scoring, onboarding. Every agent decision has immediate financial consequences and regulatory exposure. Explainability is mandatory.

80% reduction in KYC time — ABN AMRO

Healthcare

Clinical decision support, triage, admin automation. Highest accuracy bar — a hallucinating agent here is a patient safety issue. Human-in-the-loop mandatory.

–42% documentation time — AtlantiCare

Logistics

Dispatch optimization, carrier management, exception handling. One of the friendlier verticals — more structured data, faster feedback loops.

12% transport cost reduction — DHL

For deeper examples of how these play out in practice, our portfolio covers specific implementations across these verticals.

Frequently Asked Questions

Why do most AI agent projects fail?

Most AI agent projects fail because teams start with the technology instead of a clearly defined business problem. Combined with inadequate data preparation, missing evaluation frameworks, and treating AI as a one-time build rather than a continuous system, the result is pilots that never reach production. Gartner predicts over 40% of agentic AI projects will be canceled by end of 2027, and S&P Global found 42% of companies abandoned most of their AI initiatives in 2025.

What is the success rate for AI agent implementations?

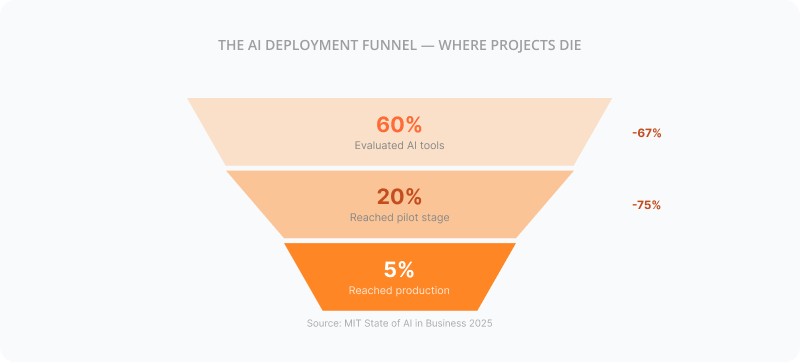

The success rate for AI agent implementations reaching production is low. MIT's 2025 report found that only 5% of organizations that evaluate AI tools reach production deployment. S&P Global found the average organization scrapped 46% of AI proof-of-concepts before production. Companies using staged approaches — validating with a focused proof of concept before committing to a full build — report significantly higher success rates than those that go straight to production builds.

How much does a failed AI agent project cost?

A failed AI agent project typically costs 2-4x the direct development budget when you factor in opportunity cost, team time, and competitive disadvantage. A mid-size production agent failure can run $60K-$250K in total impact, while enterprise multi-agent system failures can exceed $600K including strategic setbacks and regulatory exposure. CIO.com reported that 66.5% of organizations experience AI budget overruns, with costs increasing 89% between 2023 and 2025.

What is the difference between single-agent and multi-agent AI?

A single AI agent handles one specific task or workflow autonomously, like processing insurance claims or answering customer questions. A multi-agent AI system uses multiple specialized agents that coordinate and communicate with each other to handle complex workflows with interdependent steps. Choose single-agent for focused tasks and multi-agent when the workflow requires different types of reasoning across connected steps. Multi-agent is typically 3-5x the cost of single-agent, not 2x.

How long does it take to build an AI agent?

A focused AI agent proof of concept can be built in 5 days with a framework like APEX. A production-ready single-agent system typically takes 2-8 weeks. Complex multi-agent systems with full integration, testing, and compliance requirements take 3-6 months. The timeline depends heavily on data readiness — Gartner warns that organizations will abandon 60% of AI projects unsupported by AI-ready data through 2026.

What is an AI agent proof of concept?

An AI agent proof of concept is a focused validation that tests whether the hardest technical assumption in your proposed system actually works with real data. Unlike a demo, a good POC deliberately targets the riskiest part of the project. It should cost a fraction of a full build — Softermii's APEX Proof starts from $2K and delivers in 5 days — and provide a clear go/no-go decision point before you commit serious resources.

How do you evaluate an AI agent's performance?

AI agent performance evaluation requires systematic testing across accuracy, hallucination rates, edge case handling, response latency, and consistency over time. Build automated evaluation pipelines that run against real-world data scenarios, not just curated test sets. Track performance degradation over time and set alert thresholds. In regulated industries, evaluation must also cover explainability and compliance requirements.

Do AI agents need ongoing maintenance after deployment?

Yes. AI agents require continuous maintenance including monitoring for performance degradation, periodic retraining as data and conditions change, API and integration updates, and compliance adjustments. Budget 20-30% of your initial build cost annually for operations. Models drift, business rules evolve, and edge cases emerge over time — without ongoing maintenance, agent quality degrades within months.

Where to Go From Here

The failure rates aren't inevitable. They're a consequence of predictable mistakes that are entirely avoidable if you approach AI agent development with discipline instead of hype.

The pattern that works is straightforward: start small, validate fast, build incrementally. Don't bet $80K on an assumption you haven't tested. Don't skip evaluation because you're excited to ship. Don't forget that the agent needs care and feeding after launch.

If you're at the stage where you're considering an AI agent project — or trying to figure out why your current one is stalled — the lowest-risk next step is a focused proof of concept. Test the hard part first. Our APEX Proof gives you a working prototype against your actual use case in 5 days, starting from $2K. It's designed to give you a clear answer before you commit serious resources.

Whether you work with us or not, don't skip that validation step. The companies that failed wish they hadn't.
