Explore Latest Insights

Trending Articles

Artificial Intelligence

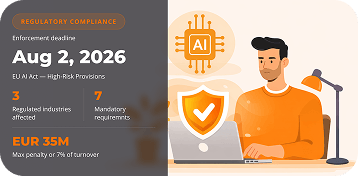

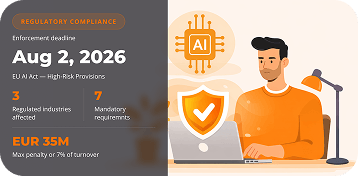

EU AI Act high-risk provisions take effect on August 2, 2026, with penalties up to EUR 35M or 7% of turnover. Actionable compliance checklist and 6-month roadmap for insurance, fintech, and healthcare AI…

17 March 2026 • 23 min read

Artificial Intelligence

Why Most AI Agent Projects Fail (And How to Be the Exception)

Over 40% of agentic AI projects will be canceled by 2027 (Gartner). Here are the 7 failure patterns we see repeatedly — and a practical framework to prevent every one of them.

12 March 2026 • 22 min read

Artificial Intelligence

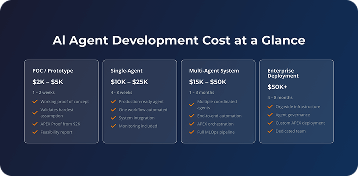

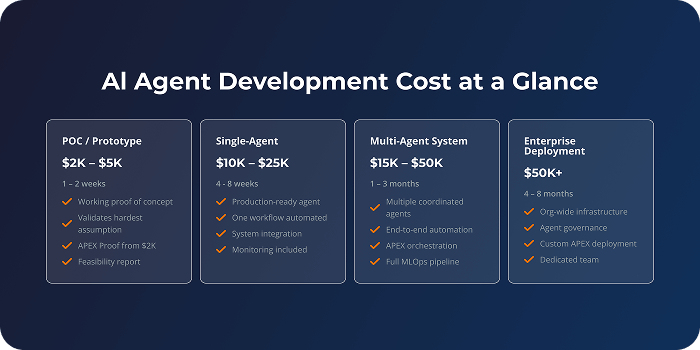

How Much Does AI Agent Development Cost in 2026? Complete Pricing Breakdown

Complete AI agent development cost breakdown for 2026. POC from $2K, production from $5K. Real pricing from 100+ projects, hourly rates, hidden costs, and ROI data by industry.

10 March 2026 • 18 min read

Artificial Intelligence

How to Build an AI Agent: Complete Step-by-Step Guide for 2026

An AI agent is a software system that uses large language models to autonomously perceive its environment, make decisions, and execute actions toward specific goals using tools and APIs. Unlike…

10 February 2026 • 49 min read

Artificial Intelligence

Top AI Development Companies: Complete Evaluation Guide for 2026

AI development companies build custom artificial intelligence systems for businesses. They create machine learning models, computer vision tools, natural language processing applications, and…

03 February 2026 • 69 min read

Artificial Intelligence

How to Measure ROI from AI Projects: KPIs, Frameworks & Templates

ROI from AI projects measures whether the value you gained exceeds what you invested. It compares the financial returns and business benefits generated by an AI initiative against its total costs.

06 January 2026 • 39 min read

Web Development

Top 10 Web Development Companies – Find the Right Partner for Your Business

The web development landscape in 2025 looks nothing like it did just three years ago. AI is no longer a tagline; it's baked into how websites think, respond, and personalize experiences in real…

11 November 2025 • 22 min read

Artificial Intelligence

Artificial Intelligence

How Much Does AI Agent Development Cost in 2026? Complete Pricing Breakdown

Complete AI agent development cost breakdown for 2026. POC from $2K, production from $5K. Real pricing from 100+ projects, hourly rates, hidden costs, and ROI data by industry.

10 March 2026 • 18 min read

Artificial Intelligence

Why Most AI Agent Projects Fail (And How to Be the Exception)

Over 40% of agentic AI projects will be canceled by 2027 (Gartner). Here are the 7 failure patterns we see repeatedly — and a practical framework to prevent every one of them.

12 March 2026 • 22 min read

Artificial Intelligence

EU AI Act high-risk provisions take effect on August 2, 2026, with penalties up to EUR 35M or 7% of turnover. Actionable compliance checklist and 6-month roadmap for insurance, fintech, and healthcare AI…

17 March 2026 • 23 min read

Artificial Intelligence

How to Implement AI in Your Business: A Strategic Guide for 2025

Do you have a mobile app idea you'd like to come true? Our first recommendation: don't rush. As our experience working with startups suggests, lots of such projects want to jump from a concept…

24 June 2025 • 21 min read

Web Development

![Web Application Development Cost in 2025 [A Step-by-step Guide]](/assets/uploads/blog/20251030/cover-big.png)

Web Development

Web Application Development Cost in 2025 [A Step-by-step Guide]

The web development market is booming – valued at $74.69 billion in 2025 and set to reach $104.31 billion by 2030. Even more impressive? The progressive web app market is exploding at a 31% growth…

30 October 2025 • 40 min read

![How Much Does It Cost to Build a Website [Full Pricing Guide]](/assets/uploads/blog/20251104/cover-small.png)

Web Development

How Much Does It Cost to Build a Website [Full Pricing Guide]

Building a website in 2025? You're not alone. Every 3 seconds, a new website goes live on the internet. But here's what most business owners discover the hard way: that "simple $500 website"…

04 November 2025 • 56 min read

Web Development

Top 10 Web Development Companies – Find the Right Partner for Your Business

The web development landscape in 2025 looks nothing like it did just three years ago. AI is no longer a tagline; it's baked into how websites think, respond, and personalize experiences in real…

11 November 2025 • 22 min read

Mobile Development

Mobile Development

How Much Does It Cost to Build an App in 2025

Mobile apps are now part of our daily lives. From shopping online to booking a doctor's appointment, almost everything can be done through an app. Mobile apps are projected to reach 299 billion…

28 August 2025 • 37 min read

Mobile Development

Top 10 Mobile Application Development Companies in 2025

Your business needs a mobile app. You've known it for months, maybe longer. Your competitors have one. Your customers keep asking for it. Every day you wait, you're leaving money on the table.

07 November 2025 • 18 min read

Software Development

Software Development

Top 10 Software Development Companies in 2025: Your Guide to Finding the Perfect Tech Partner

The web development landscape in 2025 looks nothing like it did just three years ago. AI is no longer a tagline; it's baked into how websites think, respond, and personalize experiences in real…

14 November 2025 • 18 min read

Software Development

Discovery Phase in Software Development: Steps, Deliverables, Benefits

Here's a sobering fact: 70% of software projects fail or miss their targets. Most don't fail because of bad code—they fail because teams build the wrong thing, run out of budget halfway through,…

25 November 2025 • 31 min read

Software Development

Top IT Staff Augmentation Companies in 2026

You need developers, but hiring takes forever. Your project deadline is approaching, and your team is stretched thin. Sound familiar?

27 November 2025 • 16 min read

Data Practices

Data Practices

How Much Does Data Analytics Cost

Companies no longer rely on gut instinct; instead, they are increasingly turning to data-driven decision-making for competitive insights from their information assets. No wonder that data analytics…

03 April 2024 • 16 min read

Data Practices

On-Premise to Cloud Migration: Ultimate Guide

The progression of digital infrastructure signifies a notable milestone in the way companies operate. This transformation is characterized by on-premise to cloud migration. Businesses face challenges…

27 March 2024 • 14 min read

Data Practices

Large Language Models (LLMs) Use Cases in Diverse Domains

Large language models such as ChatGPT have rapidly become one of the most talked-about technologies in artificial intelligence. These neural network models have demonstrated capabilities in…

13 March 2024 • 17 min read

Data Practices

How to Reduce AWS Costs: Proven Strategies and Best Practices

AWS cost optimization is about maximizing ROI in the cloud. AWS offers over 200 fully featured services from data centers globally, often on a pay-as-you-go basis.

06 March 2024 • 23 min read

eCommerce

eCommerce Apps

Ecommerce App Development 101: A Step-by-Step Tutorial

eCommerce mobile app usage has grown steadily, especially since COVID-19. If you're an online shop owner and want to expand your business, you need to consider ecommerce application…

08 May 2022 • 20 min read

eCommerce Trends

Top 9 E-commerce Technology Trends in 2024

E-commerce technology trends in 2024 promise innovative developments that will further transform the online shopping and selling experience. The industry has experienced explosive growth over…

27 December 2023 • 23 min read

Website

eCommerce Platform Development: Ultimate Guide for 2023

If you own a business selling products or services online and wonder how to make it more successful, these eCommerce development best practices are worth applying.

01 June 2022 • 26 min read

eCommerce Trends

Data Analytics in E-Commerce: Unlocking Business Potential

Data analytics in e-commerce transforms how businesses operate and interact with their customers. It examines, cleans, transforms, and models data to discover useful information. Companies gain…

06 December 2023 • 20 min read

Fintech

Fintech Apps

How to Develop a Fintech App from Scratch: Features & Costs

Fintech is taking global industries by storm and revolutionizing the way business is conducted. Broadly speaking, fintech means a set of technologies aimed at improving financial management and facilitating…

04 March 2022 • 23 min read

![How to Build Your Own Cryptocurrency Exchange Platform [Softermii's Guide]](/assets/uploads/blog/20220316/cover-small.png)

Blockchain

How to Build Your Own Cryptocurrency Exchange Platform [Softermii's Guide]

Creating a cryptocurrency exchange requires a good understanding of the industry, market trends, and legal regulations. The cryptocurrency market is almost entirely online, available…

15 December 2022 • 26 min read

Blockchain

How to Develop Blockchain Applications Step-by-Step

Are you thinking about blockchain application development but not sure where to start? Are you overwhelmed with the technical complexity and high costs of this process? You're not alone! Many…

19 May 2023 • 16 min read

Blockchain

Crypto Payment Gateway Development: Ultimate Guide

Bitcoin and Ethereum have gained mainstream traction, and more organizations now accept them as payment. Cryptocurrency payment gateway development empowers companies to capitalize on this growing…

20 March 2024 • 14 min read

Telecommunication

Event

Video Conferencing Software Development Guide: Types, Features & Cost

Video conferencing software development is at its peak. Platforms like Zoom, MS Teams, and Google Meet dominate the market. Discord has seen an increase in daily active users.

27 July 2023 • 25 min read

Social Media Apps

Live Streaming Shopping App Development: Cost, Tech Stack, and Benefits

Today, we can all agree on one undeniable truth: the pandemic has irrevocably changed the way we shop. It's no surprise that online shopping is at the center of emerging trends. The e-commerce…

03 December 2022 • 17 min read

Social Media Apps

How to Create a Social Media App

Social media apps have drastically changed our day-to-day communication. Now you can share meaningful ideas on Twitter, express yourself on TikTok, and find new friends on Facebook. And this…

07 September 2022 • 20 min read

Audio Apps

How To Build a Music Streaming App: A Comprehensive Guide

Statista projects that revenue in the music streaming app sector will reach $33.7 billion by 2025. What does this mean for you? One thing only! It is an amazing opportunity…

18 March 2022 • 26 min read

Real Estate

Real Estate Apps

Property Management Software Development for Real Estate

Despite higher mortgage rates and an increase in housing supply, home prices have continued to surge – the numbers still show the market is quite resilient, and costly. According to Forbes, the…

12 August 2022 • 30 min read

Real Estate Trends

Blockchain Technology in Real Estate: Use Cases & Challenges

Have you heard about the benefits of adopting blockchain in the real estate industry? Or do you still believe this technology won’t be of any use to traditional businesses?

05 December 2022 • 16 min read

Real Estate Websites

Real Estate Website Development: Must-Have Features, Costs & Timeframes

If you are planning to develop your own real estate website or already have one, you’re probably aware that a first-rate website is of the utmost importance for business.…

16 September 2022 • 24 min read

Real Estate Trends

17 Real Estate Technology & Property Trends for 2024

While real estate technology trends have historically lagged, momentum is now clearly accelerating. This industry, globally valued at over $3.9 trillion, stands at the precipice of a new era.…

24 January 2024 • 21 min read

Healthcare

Healthcare Software

Healthcare Software Development: The Ultimate Guide

Investing time and effort into healthcare software development is both honorable and profitable. In 2022, the global digital health market was worth an estimated $334 billion, according to Statista.…

03 May 2023 • 18 min read

Telemedicine

The Cost of Telehealth Implementation: Is It a Worthy Investment?

Telemedicine continues to be one of the fastest-growing trends in the aftermath of the COVID-19 pandemic. The pandemic has proved telemedicine to be extremely useful. Reaching its peak during…

06 October 2023 • 16 min read

Healthcare Trends

Hyper-Personalized Medicine Explained

Digital transformation is everywhere, and healthcare is no exception. Although we're accustomed to perceiving this trend in process optimization and communication facilities,…

07 September 2021 • 7 min read

Healthcare Trends

13 IT Trends in Healthcare to Watch in 2023

Emerging technologies in healthcare have significantly influenced the adaptations and transformations in the industry. Healthcare IT trends have become pivotal in 2023, highlighting the advancements…

29 December 2023 • 27 min read

Find out more about our software development opportunities

Don’t Dream for Success, Let Us Make It Real

Tell us what you’re building. We’ll tell you how fast we can ship it — and what it’ll cost.

- Los Angeles, USA: 10828 Fruitland Dr. Studio City, CA 91604
- Austin, USA: 701 Brazos St, Austin, TX 78701
- Tel Aviv, IL: 31, Rothschild Blvd
- Warsaw, PL: Przeskok 2
- London, UK: 6, The Marlins, Northwood
- Munich, DE: 3, Stahlgruberring
- Vienna, AT: Palmersstraße 6-8, 2351 Wiener Neudorf
- Kyiv, Ukraine: 154, Borshchagivska Street